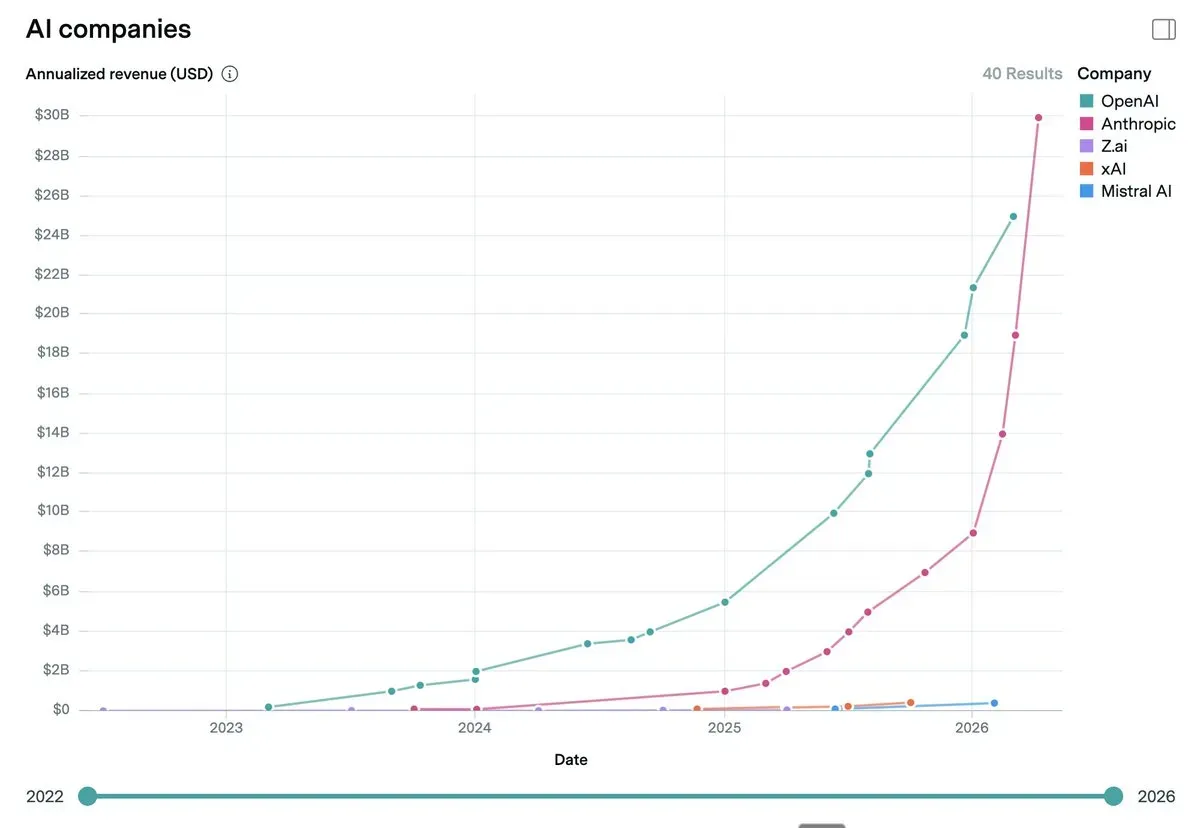

Once upon a time, there was a safety-obsessed startup founded by former OpenAI employees worried about the risks of artificial intelligence. Within two years, that same company overtook the most famous AI organisation on the planet in terms of revenue. Anthropic reached an annual recurring revenue (ARR) of over 30 billion dollars, compared to OpenAI's 25 billion. That is thirty times its size in just 15 months. The leap from 9 billion to 30 billion took place in four months.

The story behind these numbers is even more striking. In February 2026, at the time of the Series G funding round, Anthropic had over 500 enterprise customers each spending more than $1 million a year. Today that number has surpassed 1,000, doubling in less than two months. Not freemium users, not individual subscriptions: serious companies with solid contracts that renew and expand. The products that have driven this acceleration are clear: Claude Cowork has transformed the way professional teams operate, while Claude Code has become the digital chief of staff for thousands of operators. Anthropic's ARR is now higher than the revenue of all but 129 companies in the S&P 500 index. This is a company that was still not generating significant revenue at the beginning of 2024.

But the real announcement this week has a name that is both poetic and disturbing: Project Glasswing. Anthropic has launched a cybersecurity coalition that includes Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks. At the heart of the project is Claude Mythos Preview, a frontier model not yet publicly available that has already identified thousands of zero-day vulnerabilities in every major operating system and popular web browser. A 27-year-old flaw in OpenBSD, reputed to be one of the world's most secure operating systems, discovered within minutes. A 16-year-old bug in FFmpeg, never caught in five million automated tests. A chain of vulnerabilities in the Linux kernel that allowed an attacker to escalate privileges up to complete control of the machine.

The benchmarks speak for themselves: Claude Mythos Preview scores 93.9 per cent on SWE-bench Verified, 83.1 per cent on CyberGym for reproducing vulnerabilities, and 82.0 per cent on Terminal-Bench 2.0. For a direct comparison, these numbers exceed the previous Opus 4.6 model by 15 to 25 percentage points. Anthropic has pledged 100 million dollars in usage credits for project partners, plus 4 million in direct donations to open source organisations such as the Apache Software Foundation and OpenSSF.

The name chosen for the project is not accidental. Greta oto, the glasswing butterfly with transparent wings, hides in plain sight, just like the vulnerabilities that Mythos detects in the code that billions of people use every day. Transparency as a method, not as marketing. Anthropic has published cryptographic hashes of the discovered flaws while the details stay private, pending vendor patches, so that the findings can be verified once they are made public. Responsible disclosure in both a technical and a cultural sense.
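To make the idea of publishing a hash before the details concrete, here is a minimal sketch of a hash commitment in Python. It is an illustration under stated assumptions, not a description of what Anthropic actually does: the article does not say which hash function or report format is used, so SHA-256, the random nonce, and the function names `commit` and `verify` are all hypothetical choices.

```python
import hashlib
import hmac
import secrets


def commit(report: bytes) -> tuple[str, bytes]:
    """Return (public digest, secret nonce) for a private report.

    Assumption: SHA-256 over nonce + report. The fixed-length random
    nonce stops anyone from guessing short or predictable reports.
    """
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + report).hexdigest()
    return digest, nonce


def verify(digest: str, nonce: bytes, report: bytes) -> bool:
    """Check that a revealed report matches a digest published earlier."""
    expected = hashlib.sha256(nonce + report).hexdigest()
    return hmac.compare_digest(expected, digest)


# Hypothetical advisory text, used only for illustration.
report = b"example advisory: out-of-bounds read in a sample parser"

# Step 1: publish only the digest while the vendor works on a patch.
published_digest, secret_nonce = commit(report)

# Step 2: after the patch ships, reveal the report and the nonce.
# Anyone can now confirm the finding predates the disclosure.
print(verify(published_digest, secret_nonce, report))
```

The digest reveals nothing useful about the flaw, yet it ties the discoverer to the exact text of the report: changing a single byte after the fact would make verification fail.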

The real news is not that Anthropic has more money than OpenAI. The real news is that the company founded on the idea that AI should be ‘safe and beneficial’ is proving that safety and growth are not contradictory, and that as models become capable of hacking into any computer system on the planet, having a company obsessed with safety in control of them is the only thing standing between us and global digital chaos. Sleep soundly, but with one eye open.

For more, see the link to the project, while here is the link to the official paper.